For most large enterprises, enforcing security controls like multi-factor authentication (MFA) is a high-priority project. After all, Microsoft famously found that “MFA can block over 99.9 percent of account compromise attacks.” In exchange for such a massive gain in security, enforcing MFA is a no-brainer.

At the same time, enforcing MFA across all enterprise resources is a challenging and time-consuming task. This is especially true when there are large numbers of end-users, and even more endpoint devices, all spread around the globe. Many users are likely working from home and some may be using a personal device to access enterprise resources.

Traditionally, security controls like MFA and device authentication are enforced at the application layer. This approach requires modifying existing applications and manually installing security software on each and every server, one by one. For large enterprises with thousands of servers (email servers, web applications, file servers, databases, and so on), it’s clearly a massive undertaking.

This blog post will outline a new approach to enforcing security controls that doesn’t require any custom code or modifications to servers. The trick is to enforce the controls at the transport (or session) layer, before the client establishes a connection with the server at the application layer. Using this approach, the downstream applications remain unchanged but still benefit from the security controls. But before we get to that, let’s start from the top.

Types of Security Controls

MFA is arguably the best-known security control, but other important controls exist as well. A properly implemented IAM/PAM system will allow for the addition of new security controls via the click of a button, instead of requiring software changes. Below is a non-exhaustive list of some of the security controls an enterprise should seek to implement:

Multi-Factor Authentication (MFA) – Require end-users to authenticate through at least two factors. End-users should provide something they know (such as a password or an answer to a security question), plus either something they have (a physical object such as a key card or USB security key) or something they are (biometric authentication such as a fingerprint or facial recognition scan).

Device Authentication – In addition to authenticating the end-user, authenticate the device that the user is using and ensure that it is valid for the request being made and up-to-date with security patches. This is especially important when users are accessing systems remotely (e.g., working from home).

Just-in-Time Access (JIT) – Just because a user has access to a resource doesn’t mean they should be allowed to access it any time they want. Instead, access should be granted only when there is a need for it. For example, system administrators may be allowed to SSH to production servers, but only once a ticket requesting a change to the system has been approved.

Approval Workflows – Access to systems is only granted when a quorum of approvers approve the request. If any approver rejects the request, access is not granted and a system alert is generated.

Notifications – Access to resources results in notification messages sent to appropriate personnel, allowing for early detection of anomalous behavior. These notifications can be sent via email or any other enterprise communication system. Of course, notifications can quickly turn into spam, so they should be configured only for the resources that require them.

Geolocation – Allow end-users to access enterprise networks and resources only if they are requesting access from certain locations and during a specified time frame, such as normal working hours. Certain geolocations can and should be restricted outright if no authorized end-users reside in those regions.
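To make the list above more concrete, here is a minimal sketch of how several of these controls might be combined into a single policy check. Everything in it is hypothetical: the `AccessRequest` and `Policy` types, the field names, and the default values (two factors, a two-approver quorum, an 08:00 to 18:00 window) are assumptions chosen for illustration, not part of any particular IAM/PAM product.

```python
from dataclasses import dataclass, field
from datetime import time


@dataclass
class AccessRequest:
    """Hypothetical description of one attempt to access a resource."""
    user: str
    factors: set            # factors already verified, e.g. {"password", "hardware_token"}
    device_trusted: bool    # result of a device check performed elsewhere
    country: str            # country code resolved from the client's location
    local_time: time        # time of day at the user's location
    approvals: int = 0      # approvers who accepted the request
    rejections: int = 0     # approvers who rejected the request


@dataclass
class Policy:
    """Hypothetical policy combining several of the controls listed above."""
    min_factors: int = 2
    require_trusted_device: bool = True
    allowed_countries: set = field(default_factory=lambda: {"US", "CA"})
    window_start: time = time(8, 0)
    window_end: time = time(18, 0)
    approval_quorum: int = 2


def evaluate(policy, request):
    """Return (allowed, reasons); reasons names every control that failed."""
    reasons = []
    if len(request.factors) < policy.min_factors:
        reasons.append("mfa")
    if policy.require_trusted_device and not request.device_trusted:
        reasons.append("device")
    if request.country not in policy.allowed_countries:
        reasons.append("geolocation")
    if not (policy.window_start <= request.local_time <= policy.window_end):
        reasons.append("time_window")
    # Any rejection blocks access, as does falling short of the quorum.
    if request.rejections > 0 or request.approvals < policy.approval_quorum:
        reasons.append("approval")
    return (not reasons, reasons)


policy = Policy()
ok_request = AccessRequest("alice", {"password", "hardware_token"}, True,
                           "US", time(10, 30), approvals=2)
blocked = AccessRequest("bob", {"password"}, True, "FR", time(23, 0), approvals=2)

print(evaluate(policy, ok_request))  # (True, [])
print(evaluate(policy, blocked))     # (False, ['mfa', 'geolocation', 'time_window'])
```

Returning the list of failed controls, rather than a bare yes or no, is one way to feed the notification and audit requirements described above.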

Survey The Field

The first step in enforcing security controls on all resources across the enterprise is taking inventory of all of the end-users (and the permissions they should have), as well as all of the servers (and the resources they provide) in your environment.

There is a plethora of resources to consider: email servers, file servers, web applications, production systems, source code repositories, build servers, databases, and more. It’s essential to create an exhaustive list of every server on the enterprise network and know what resources they are providing to clients.

Implement Key-Based Authentication

Different resources are accessed from different clients, using different protocols. A web browser uses HTTPS to access a web application, a remote desktop client uses RDP to connect to a remote system, an IDE may use TLS or SSH to commit code to a source repository, and so on.

While there is a wide variety of clients, protocols, and resources, they all have one thing in common: they support key-based authentication. Key-based authentication offers a number of advantages over traditional username/password authentication. For starters, end-users can’t be relied upon to create strong passwords. Weak passwords make it relatively easy for attackers to gain unauthorized access to networks and resources through basic social engineering and rudimentary brute force attacks.

Furthermore, passwords are shared secrets, meaning that the server must keep a database of all users’ passwords (or at least the hashes of the passwords) in order to authenticate the users. If an attacker gains access to this trove of credentials, all of the accounts could be compromised.

Compare this to key-based authentication, which uses unshared secrets. In this process, one party must prove ownership of a private key via a digital signature, but they never reveal or share the private key with the verifier, hence the term unshared secret. This authentication method provides a much higher level of security because the private keys can be kept in secure storage, such as a key manager or a hardware security module (HSM). More on this in the following section.
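The idea of proving ownership of a key without revealing it can be illustrated with a short challenge-response sketch. To stay within the Python standard library, it uses textbook RSA with a deliberately tiny modulus built from two known Mersenne primes and no padding. That is an assumption made purely for illustration and is not secure; a real deployment would rely on a vetted cryptographic library or an HSM, and the function names here are hypothetical.

```python
import hashlib
import secrets

# Toy parameters (illustration only): two known Mersenne primes. The modulus
# is far too small for real use and no padding scheme is applied.
P = 2**61 - 1
Q = 2**89 - 1
N = P * Q          # public modulus
E = 65537          # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent; stays with the prover


def digest_as_int(message: bytes) -> int:
    """Hash the message and reduce it into the range of the toy modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def sign(message: bytes, private_exponent: int) -> int:
    """Prover side: requires the private exponent."""
    return pow(digest_as_int(message), private_exponent, N)


def verify(message: bytes, signature: int) -> bool:
    """Verifier side: uses only the public values N and E."""
    return pow(signature, E, N) == digest_as_int(message)


# The verifier issues a random challenge; the prover signs it.
challenge = secrets.token_bytes(32)
signature = sign(challenge, D)

print(verify(challenge, signature))  # True
# The same signature is not valid for a different challenge.
print(verify(b"another challenge", signature))
```

Note that `verify` never touches `D`: the verifier stores only public values, so there is no password database for an attacker to steal.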

A final point to make regarding key-based authentication is that it’s already implemented for a wide range of use cases. Under the hood, common protocols like TLS, SSH, and RDP all use public key cryptography for authentication (and, in some cases, encryption) purposes.

Centrally Secure All Cryptographic Keys

Since private keys don’t need to be (and certainly should not be) shared, all of the private keys can be secured in a centrally-managed HSM or key manager. This is a significant security improvement, as cryptographic keys are often unaccounted for and stored in software on endpoint devices, making it relatively easy for attackers to steal them without detection.

There are several other advantages to storing cryptographic keys in a centrally-managed HSM or key manager. For one, doing so drastically simplifies security administration. The enterprise can set policy, grant and revoke access, monitor key usage, and conduct audits, all from a single interface.
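As a rough sketch of what "a single interface" for granting, revoking, and auditing key access could look like, consider the in-memory registry below. The `KeyRegistry` class, its method names, and the `ssh-prod` key identifier are all hypothetical; an actual key manager would persist this state and protect the log against tampering.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class KeyRegistry:
    """Hypothetical central record of who may use which key."""
    grants: dict = field(default_factory=dict)     # key_id -> set of users
    audit_log: list = field(default_factory=list)  # (timestamp, event, key_id, user)

    def _record(self, event, key_id, user):
        self.audit_log.append((datetime.now(timezone.utc), event, key_id, user))

    def grant(self, key_id, user):
        self.grants.setdefault(key_id, set()).add(user)
        self._record("grant", key_id, user)

    def revoke(self, key_id, user):
        self.grants.get(key_id, set()).discard(user)
        self._record("revoke", key_id, user)

    def authorize_use(self, key_id, user):
        """Check a usage request and log the outcome either way."""
        allowed = user in self.grants.get(key_id, set())
        self._record("use_allowed" if allowed else "use_denied", key_id, user)
        return allowed


registry = KeyRegistry()
registry.grant("ssh-prod", "alice")
print(registry.authorize_use("ssh-prod", "alice"))  # True
registry.revoke("ssh-prod", "alice")
print(registry.authorize_use("ssh-prod", "alice"))  # False
print([event for _, event, _, _ in registry.audit_log])
# ['grant', 'use_allowed', 'revoke', 'use_denied']
```

Because every request passes through one place, revoking a user takes effect for every server that trusts the key, and denied attempts are logged alongside successful ones.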

In addition, this approach enables the enterprise to enforce granular security controls whenever a client requests to use a key.

Authenticate Clients When They Request To Use A Key

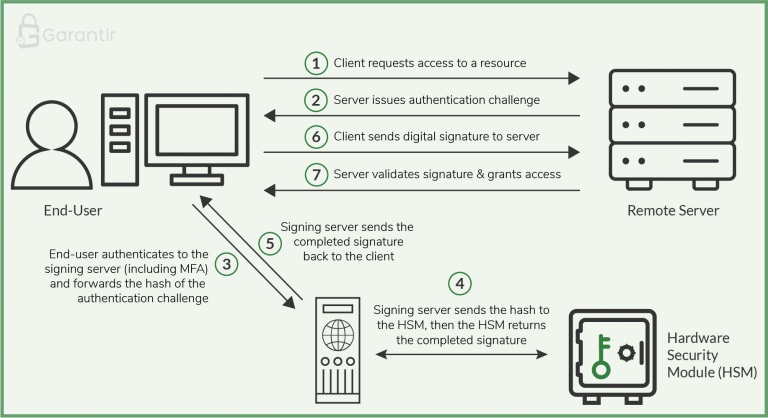

Recall that clients do not need direct access to a private key in order to authenticate to a server. All they need to do is provide a digital signature that was generated with the private key, so the clients don’t even need direct access to the HSM where the private keys are stored.

Using this knowledge, enterprises can deploy a dedicated cryptographic server in front of the HSM to authenticate clients when they request to use a key, and to interface with the HSM to perform cryptographic operations. The authentication process can include a variety of security controls like multi-factor authentication, device authentication, approval workflows, notifications, and more.

When a client needs access to an enterprise resource, they send their request to the server that provides that resource, known as the resource server. The resource server responds by sending the client a challenge that must be signed with the user’s private key. The client forwards this challenge to the cryptographic server.

The cryptographic server then authenticates the end-user according to the policy in place for that particular user and for that particular key. Once the server has authenticated the user, it interfaces with the HSM to generate the digital signature and sends the completed signature to the client. The client forwards the signature to the resource server to finalize authentication. The resource server verifies the signature and provides the end-user access to the resource.
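The exchange described above can be simulated in a few lines. This is a sketch under stated assumptions rather than a description of any real product: the `Hsm`, `CryptoServer`, and `ResourceServer` classes are hypothetical, the policy is reduced to a set of users who have already passed MFA, and the signature scheme is the same kind of insecure toy RSA (tiny modulus, no padding) used only so the example runs on the standard library.

```python
import hashlib
import secrets

# Toy RSA public values (illustration only; insecure by design).
P, Q, E = 2**61 - 1, 2**89 - 1, 65537
N = P * Q


def digest_as_int(data: bytes) -> int:
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % N


class Hsm:
    """Stand-in for the HSM: the private exponent never leaves this object."""
    def __init__(self):
        self._d = pow(E, -1, (P - 1) * (Q - 1))

    def sign(self, data: bytes) -> int:
        return pow(digest_as_int(data), self._d, N)


class CryptoServer:
    """Authenticates the user first, then asks the HSM to sign."""
    def __init__(self, hsm, mfa_verified_users):
        self._hsm = hsm
        self._mfa_verified_users = mfa_verified_users  # simplified policy

    def sign_for(self, user: str, challenge: bytes) -> int:
        if user not in self._mfa_verified_users:
            raise PermissionError(f"{user} has not passed MFA")
        return self._hsm.sign(challenge)


class ResourceServer:
    """Knows only the public key (N, E); unaware of the controls upstream."""
    def new_challenge(self) -> bytes:
        return secrets.token_bytes(32)

    def verify(self, challenge: bytes, signature: int) -> bool:
        return pow(signature, E, N) == digest_as_int(challenge)


def client_login(user, resource_server, crypto_server) -> bool:
    """Client role: relay the challenge and the signature between servers."""
    challenge = resource_server.new_challenge()
    try:
        signature = crypto_server.sign_for(user, challenge)
    except PermissionError:
        return False  # policy check failed, so no signature is produced
    return resource_server.verify(challenge, signature)


crypto_server = CryptoServer(Hsm(), mfa_verified_users={"alice"})
resource_server = ResourceServer()
print(client_login("alice", resource_server, crypto_server))    # True
print(client_login("mallory", resource_server, crypto_server))  # False
```

The resource server's code contains nothing about MFA: it simply verifies a signature, which is why the control can be added without changing it. A production resource server would also track which challenges it issued so that a signature cannot be replayed.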

The Result: Enforce Security Controls Without Software Changes

Security controls like multi-factor authentication are typically enforced at the application layer, which requires manual modifications to applications and servers. This might not seem like a major ordeal for one or two servers, but when there are thousands of servers to update and maintain, it becomes a real challenge.

When key-based authentication is implemented across the enterprise and all of the cryptographic keys are centrally secured, a range of security controls can be enforced at the transport (or session) layer when users request to use a key.

As a result, controls like MFA and device authentication can be enforced on all enterprise resources and any number of servers without any custom development work. Security policy can be set on a per-key or per-user basis and the controls are enforced before the key is used. As an added benefit, this approach makes it easy to manage access to the keys, monitor key usage, perform audits, and maintain compliance.

Reach out to the Garantir team to learn more about deploying this architecture in your environment.